Even better performance from WP Super Cache

In a previous post, we talked about how increasing the WP Super Cache “Expire time” from 1 hour to 48 hours can help the performance of WordPress blogs.

Here’s another tip that can help dramatically: Remove “bot”, “ia_archive”, “slurp”, “crawl”, “spider” and “Yandex” from the Rejected User Agents box in the WP Super Cache plugin settings. (In most cases, this will leave the box completely empty.)

Those “Rejected User Agents” prevent cached pages from being created when a search engine “visits” your site. The FAQ says this is because there’s no point creating a cached file for a page that isn’t popular, which may be true for sites that don’t have many posts and aren’t that busy. The author of the plugin is wisely being conservative to avoid problems on hosting companies that use “NFS” disks where saving a cached file can be slow.
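To make the effect of that box concrete, here is a minimal Python sketch of how such a list could gate cache creation. It is not the plugin's actual code (WP Super Cache is written in PHP). The function name `may_create_cache_file` and the case-insensitive substring matching are assumptions made for illustration; the list entries are the ones named above.

```python
# Illustrative sketch only; this is not WP Super Cache's real implementation.
# The entries below are the ones listed in the post.
DEFAULT_REJECTED_AGENTS = ["bot", "ia_archive", "slurp", "crawl", "spider", "Yandex"]


def may_create_cache_file(user_agent: str, rejected_agents: list) -> bool:
    """Return True if a visit from this user agent may create a cached page.

    Assumption: an entry "rejects" a visitor when it appears anywhere in the
    User-Agent string, compared case-insensitively.
    """
    ua = user_agent.lower()
    return not any(entry.lower() in ua for entry in rejected_agents if entry)


googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# With the listed entries, a crawler visit does not create a cached page.
print(may_create_cache_file(googlebot, DEFAULT_REJECTED_AGENTS))  # False

# With the box emptied, the same visit is allowed to populate the cache.
print(may_create_cache_file(googlebot, []))  # True
```

Under these assumptions, emptying the list means the first crawler visit to an old post saves a cached copy, which later visitors and crawlers can then be served.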

We don’t use NFS like that, though. And on a busy site with lots of archived posts, there’s a very good chance that a page that’s indexed by a search engine will be reindexed or viewed several times within the next couple of days. Allowing these pages to be cached can make a big difference. Besides, there’s no good reason not to cache a copy of the page, since you should be sending the same page to search engines as you do to actual users.

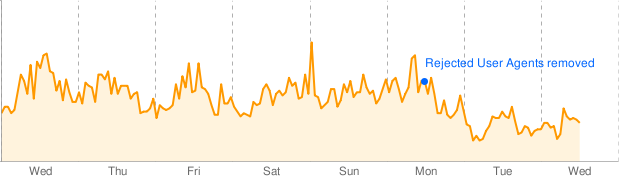

We removed those “Rejected User Agents” on a busy site with several thousand archived posts, with the “Expire time” set to 48 hours (172800 seconds) as we recommend, and here’s what happened to that site’s CPU usage:

As you can see, the average load dropped by almost half as more and more pages were cached. That’s another way of saying that the average page loading speed almost doubled. Those are pretty good results for a change that took just a few seconds to make.
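For readers curious about what the “Expire time” means in practice, the following Python sketch shows one way a cache could decide whether a saved page is still usable, by comparing the file's modification time with the expire time. It is a hedged illustration, not the plugin's code: the helper name `is_fresh` and the temporary file standing in for a cached page are hypothetical.

```python
import os
import tempfile
import time

# 48 hours expressed in seconds, the value recommended in the post.
EXPIRE_SECONDS = 48 * 60 * 60  # 172800


def is_fresh(path, expire_seconds, now=None):
    """Return True if the file at `path` was modified less than
    `expire_seconds` ago (hypothetical helper, for illustration only)."""
    now = time.time() if now is None else now
    return (now - os.path.getmtime(path)) < expire_seconds


# Create a stand-in for a cached page.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"<html>cached page</html>")
    cached = f.name

# Pretend a crawler or visitor returns three hours after the page was cached.
three_hours_later = os.path.getmtime(cached) + 3 * 3600

fresh_with_1h = is_fresh(cached, 3600, now=three_hours_later)
fresh_with_48h = is_fresh(cached, EXPIRE_SECONDS, now=three_hours_later)

print(fresh_with_1h)   # False: with a 1-hour expire time the page is rebuilt
print(fresh_with_48h)  # True: with a 48-hour expire time the cached copy is reused

os.remove(cached)
```

The check itself is a single timestamp lookup per file, which is why its cost depends so heavily on how quickly the underlying disk can return that timestamp.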

on Tuesday, November 2, 2010 at 1:43 am (Pacific) Siddanth wrote:

Thanks a lot for the info. My web host blocked the site and gave a 403 error, as I had set the expiry time to 3600 and I have a lot of posts. Now I have removed the rejected agents and increased the expiry time to 48 hours 😉

on Tuesday, April 3, 2012 at 12:36 pm (Pacific) ccranford wrote:

While it may seem like a no-brainer to enable this option, please keep in mind that it was placed here on purpose and for a specific reason: to keep file management overhead low. When your site has a large amount of content (a few thousand posts, tags, etc.), this option starts to become more of a detriment than a performance increase. When you have literally millions of inodes being created because of disabling this option, your CPU usage will increase dramatically because of timestamp checking per page. Lowering your expire time or leaving these variables in place may be more ideal for sites with thousands of posts if you wish to maintain performance.

on Wednesday, April 4, 2012 at 4:52 pm (Pacific) Ken wrote:

ccranford, be sure that you’ve also read the prior post that is mentioned and linked to at the start of this post. That prior post discusses the fact that a low expiration time (e.g., 3,600 seconds) is appropriate on servers that use NFS, because NFS makes it relatively slow to check file timestamps, so keeping the cache (relatively) empty is a good idea. But our servers don’t use NFS for this. We can check the file time very quickly, so there’s no performance problem with keeping files in the cache much longer (e.g., 172,800 seconds) for most sites.

Of course, customers can always experiment with different settings, and then view their account usage via our control panel.

on Thursday, July 4, 2013 at 8:45 pm (Pacific) Tech Blog Guy wrote:

Thanks for this post! I optimized my site and Adsense was still complaining about performance. It was driving me nuts. Guess I’ll see if things improve.